The Smart Medicine Pill Reminder is an IoT-based electronics mini project for students. Its main aim is to remind users to take their medicines, much like an alarm.

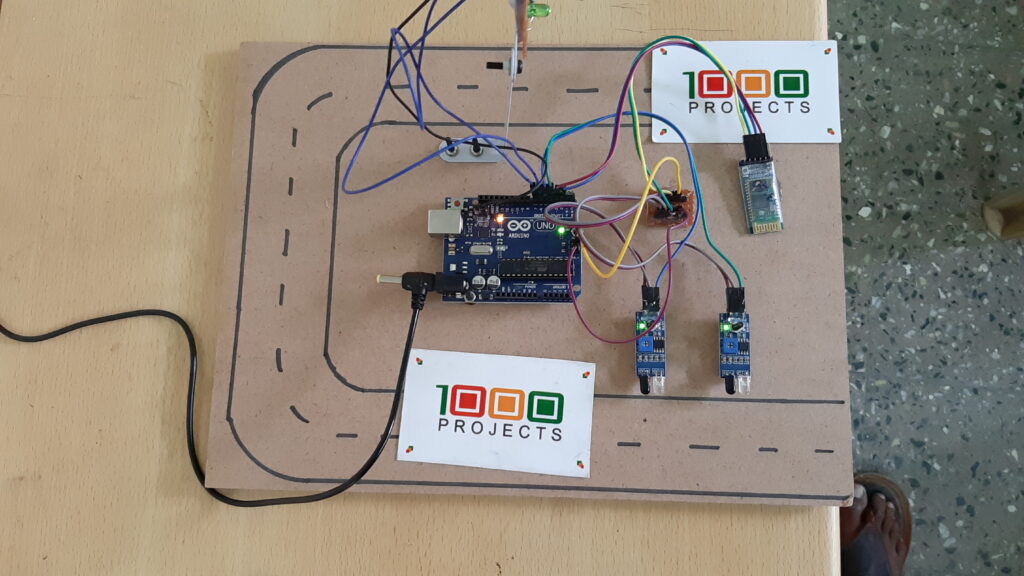

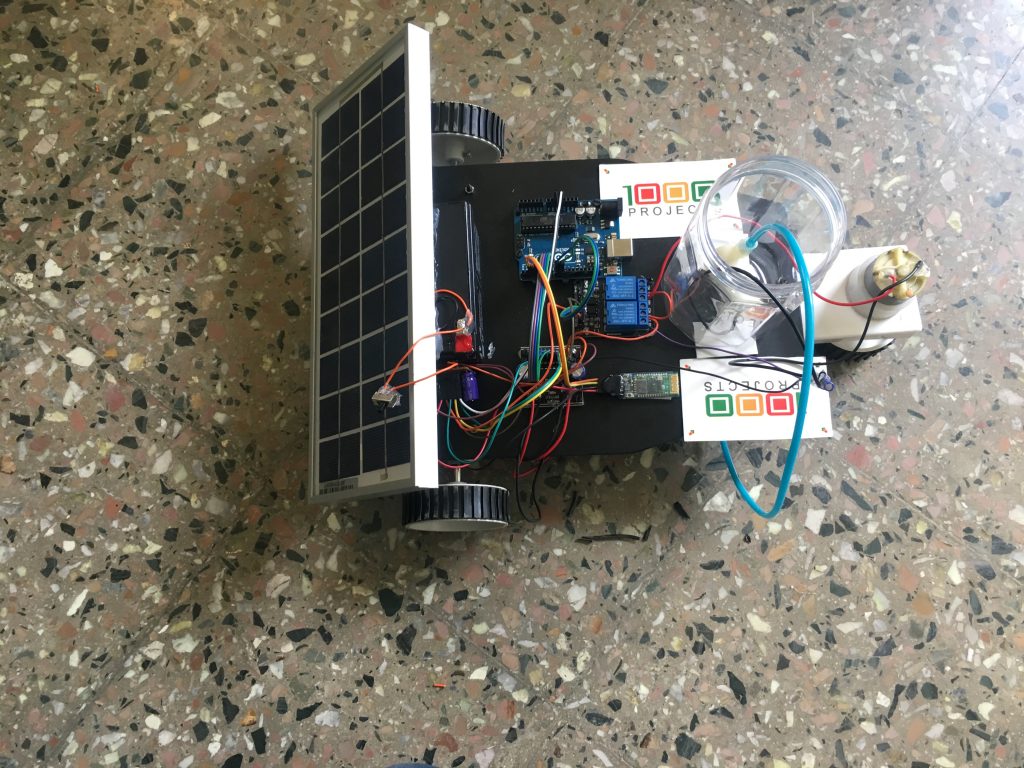

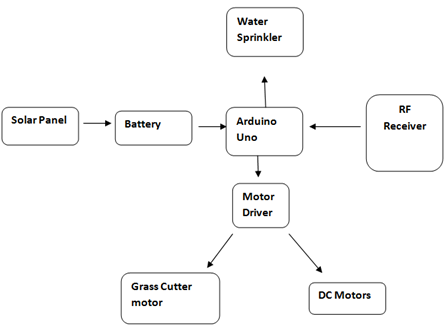

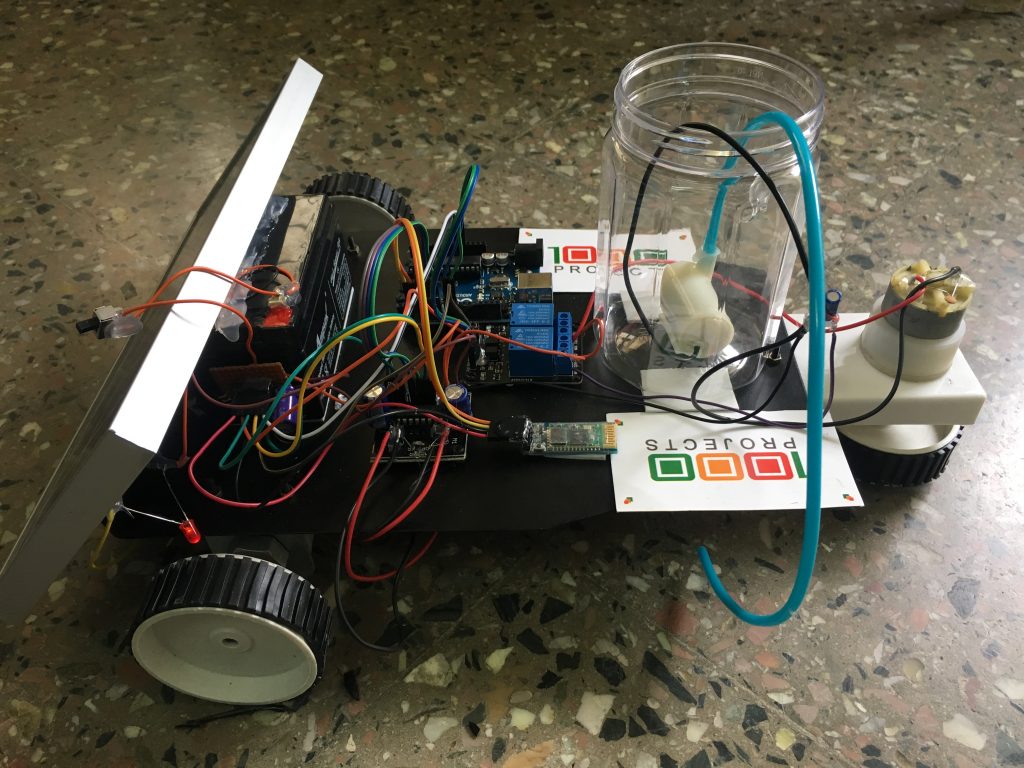

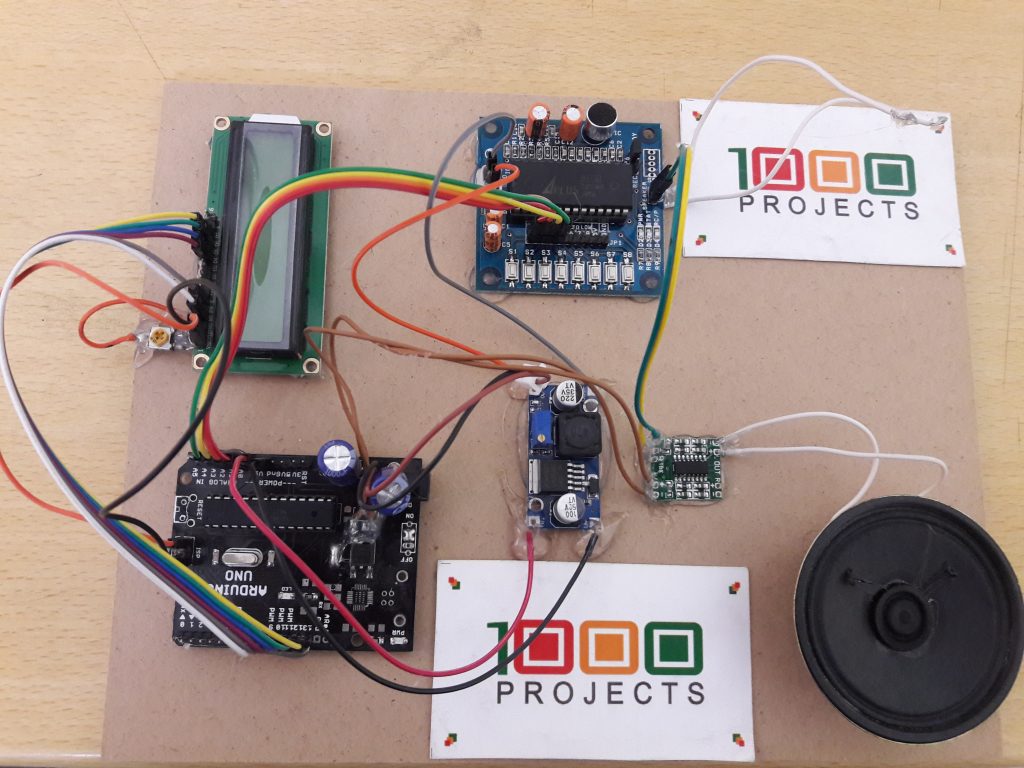

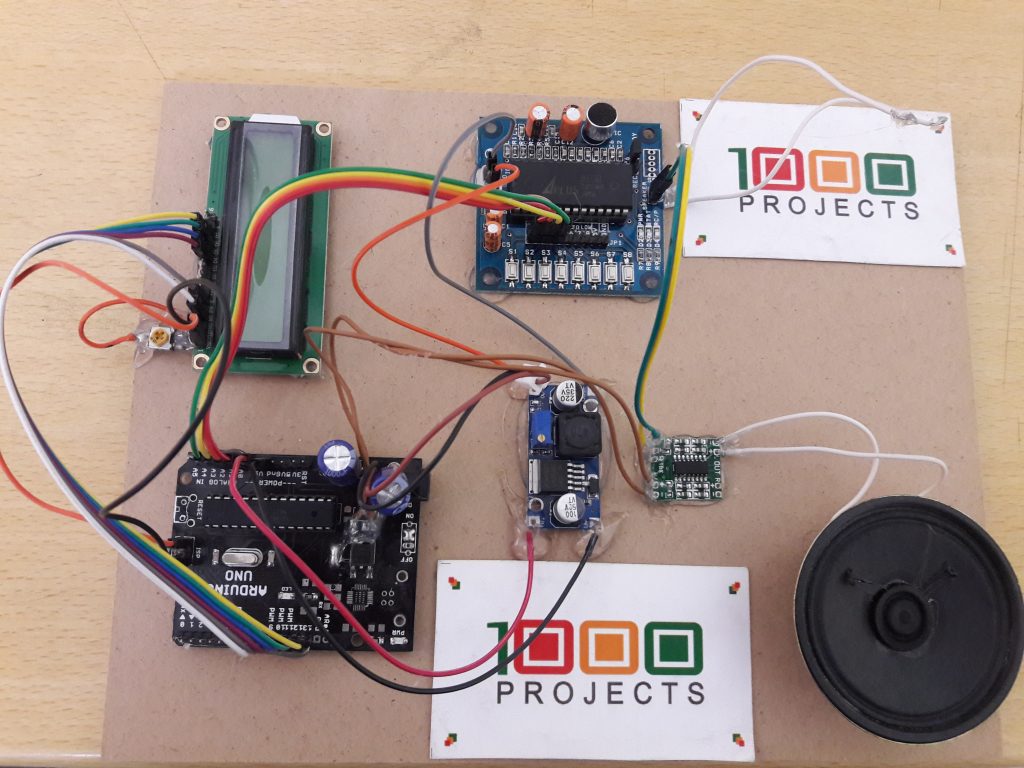

This Smart Medicine Pill Reminder uses an Arduino board and an APR33R3 voice playback module. The module feeds an amplifier, which drives a speaker, and an LCD displays the reminder message.

The main intention behind this project is to remind users of the medicines they have to take on their daily schedule. It is especially useful for elderly people, patients, and busy people.

The video below shows the smart medicine pill reminder in operation.

This is how it delivers the reminder:

The message is repeated every 10 seconds. This interval can be adjusted as needed.

The Arduino sends a signal to the APR33R3, which feeds the amplifier, and the amplifier drives the speaker.

The reminder message is also shown on the LCD screen.
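The repeating, adjustable reminder described above could be driven by a simple non-blocking timer. The following is only a minimal sketch, not the project's actual firmware: `ReminderTimer` and its fields are hypothetical names, and on an Arduino the `now_ms` argument would typically come from `millis()`.

```cpp
#include <cstdint>

// Hypothetical helper (not from the original project): decides when
// the next reminder is due. Times are in milliseconds.
struct ReminderTimer {
    std::uint32_t interval_ms;   // adjustable repeat interval (10 s in the text)
    std::uint32_t last_fire_ms;  // time at which the previous reminder fired

    // Returns true at most once per interval. The unsigned subtraction
    // stays correct when the millisecond counter wraps around.
    bool due(std::uint32_t now_ms) {
        if (static_cast<std::uint32_t>(now_ms - last_fire_ms) >= interval_ms) {
            last_fire_ms = now_ms;
            return true;
        }
        return false;
    }
};
```

With `ReminderTimer t{10000, 0};`, calling `t.due(now)` in the main loop returns true once every 10 seconds; changing `interval_ms` adjusts the repeat time.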

Smart Medicine Reminder For Elderly People Project:

The objective of this project is to develop a prototype of a smart medicine reminder for elderly people that helps them take their medicines on time.

PROJECT BACKGROUND:

In recent times, medicine consumption has increased sharply because of the spread of various diseases and illnesses across the globe. While some diseases are temporary, many take a lifelong toll on human health. Even while trying to maintain a healthy lifestyle, we often fall sick.

Illness can be dangerous if not properly treated, so visiting a doctor and taking the prescribed medicines becomes a necessity. However, failing to take the medicines regularly can cause many problems. With this problem in mind, we came up with the idea of a smart device that alerts patients to take their medicines on time, so that they recover sooner and stay healthy.

HARDWARE COMPONENTS USED:

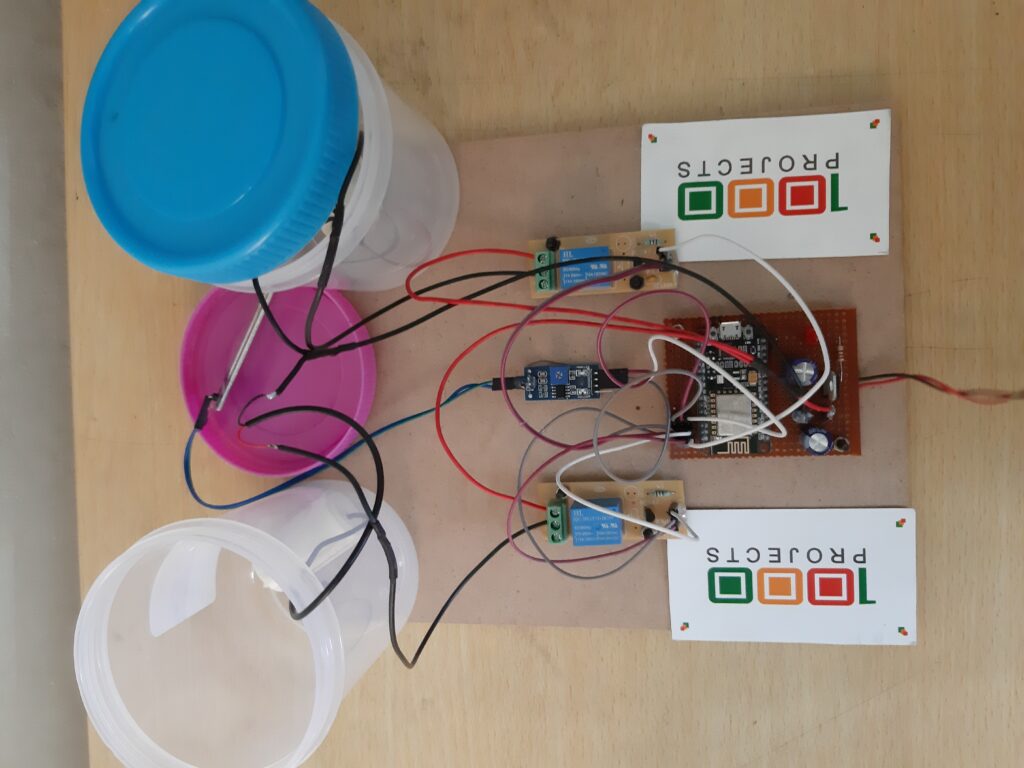

⚫ NODE-MCU

⚫ RTC MODULE

⚫ LED

⚫ JUMPER WIRES

SOFTWARE USED:

⚫ ARDUINO IDE

⚫ NODE-RED

⚫ MIT APP INVENTOR

HARDWARE:

⚫ NODEMCU:

PROJECT HIGHLIGHTS

ADVANTAGES:

⚫ Cost-efficient.

⚫ Needs no prior technical knowledge.

⚫ The sound reminder helps the user take medicines at regular times.

⚫ Easy to maintain.

⚫ Provides comfort and supports health.

⚫ Reusable.

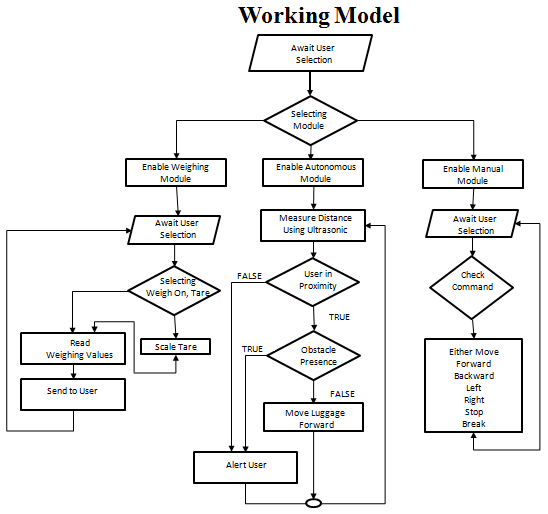

Working Procedure:

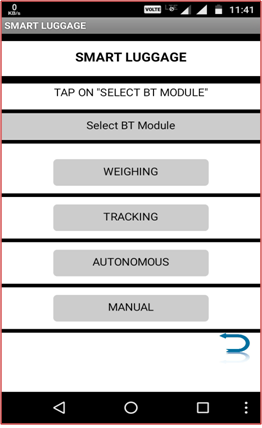

The procedure begins with setting the alarm times in the app built with MIT App Inventor.

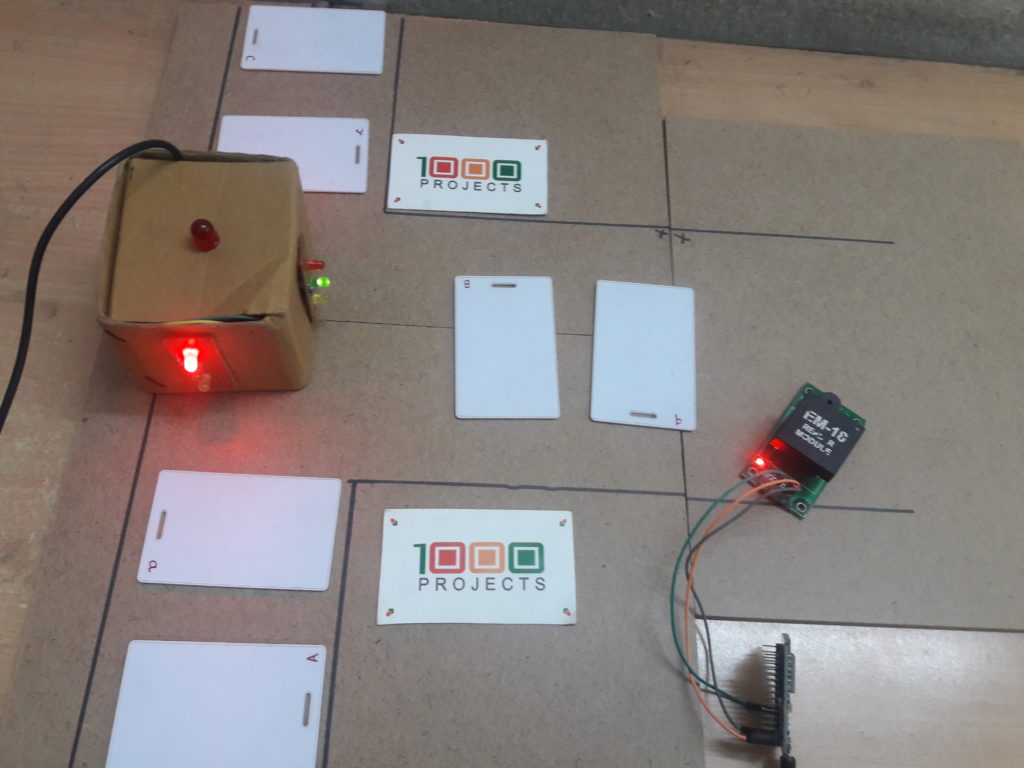

On pressing the first button “SET ALARM”, the user is taken to a new screen that enables them to set the specific alarms.

On pressing the second button “SELECT MEDICINES”, the user is taken to a web page where they can shop online for the medicines.

This is the screen that enables the user to set the timings for the alarm.

Alarm 1, Alarm 2, and Alarm 3 are time pickers that let the user select a time.

On clicking the button “Set Alarm”, the alarm is set and sent to the NodeMCU.
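The source does not say how the alarm times are encoded when they are sent to the NodeMCU. As an illustration only, the sketch below assumes each alarm arrives as a 24-hour `HH:MM` string; `AlarmTime` and `parseAlarm` are hypothetical names, not part of the original project.

```cpp
#include <cctype>
#include <string>

// Hypothetical alarm record: hour and minute in 24-hour time.
struct AlarmTime {
    int hour;
    int minute;
};

// Parses "HH:MM" (an assumed format) into `out`.
// Returns false and leaves `out` untouched on malformed or out-of-range input.
bool parseAlarm(const std::string& text, AlarmTime& out) {
    if (text.size() != 5 || text[2] != ':') return false;
    const int digitPositions[] = {0, 1, 3, 4};
    for (int i : digitPositions) {
        if (!std::isdigit(static_cast<unsigned char>(text[i]))) return false;
    }
    const int h = (text[0] - '0') * 10 + (text[1] - '0');
    const int m = (text[3] - '0') * 10 + (text[4] - '0');
    if (h > 23 || m > 59) return false;
    out.hour = h;
    out.minute = m;
    return true;
}
```

Rejecting malformed input on the device keeps a bad value from the app from silently overwriting an alarm that was already set.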

On clicking the second button, the user is directed to an online pharmacy website, where they can purchase the necessary medicines with a single click.

The RTC keeps time for as long as the module is powered.

When the alarm time matches the real time, the buzzer sounds and the LED corresponding to that time of day blinks at 1-second intervals.

In the same way, the afternoon and night alarms sound while their LEDs blink, indicating to the user that it’s time for medicine.
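The matching step can be sketched as a comparison between the RTC time and the three stored alarms. This is a hedged illustration rather than the project's code: `HourMinute`, `matchingAlarm`, and `ledOn` are hypothetical names, the hour and minute are assumed to have been read from the RTC already, and the blink pattern assumes the LED toggles once per second.

```cpp
#include <array>
#include <cstddef>

// Hypothetical alarm entry in 24-hour time.
struct HourMinute {
    int hour;
    int minute;
};

// Returns the index of the alarm matching the current time
// (0 = morning, 1 = afternoon, 2 = night), or -1 if none matches.
int matchingAlarm(const std::array<HourMinute, 3>& alarms, int hour, int minute) {
    for (std::size_t i = 0; i < alarms.size(); ++i) {
        if (alarms[i].hour == hour && alarms[i].minute == minute) {
            return static_cast<int>(i);
        }
    }
    return -1;
}

// Assumed blink pattern: LED on during even seconds, off during odd ones,
// so it changes state once per second.
bool ledOn(int second) {
    return second % 2 == 0;
}
```

The returned index can select which of the three LEDs to blink while the buzzer sounds.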

RESULTS AND FUTURE SCOPE

RESULT:

Our project’s objective is to help elderly people. We conclude that the project is useful for elderly people who take pills regularly and whose prescriptions are long and difficult to remember. The device notifies them at the right time about the right medicine to take, which in turn helps them stay fit and healthy.

FUTURE SCOPE:

A button on the device that, when pressed, sends an alert call or text to the caregiver if the user is ill or needs help.

A button that, if not pressed after the notification, triggers an alert message to both the user and the caregiver:

“MEDICINE NOT TAKEN. PLEASE TAKE.”
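One possible way to decide when that missed-dose alert should fire is a grace period after the alarm. The sketch below is an assumption about how this future feature might work, not an existing part of the project; `DoseStatus`, `doseStatus`, and the `grace_ms` parameter are all hypothetical.

```cpp
#include <cstdint>

// Hypothetical states for a single dose after its alarm has fired.
enum class DoseStatus { Waiting, Taken, Missed };

// Classifies a dose from the alarm time, the current time (both in
// milliseconds), whether the confirm button was pressed, and an assumed
// grace period. A Missed result is where the alert message would be sent.
DoseStatus doseStatus(std::uint32_t alarm_ms, std::uint32_t now_ms,
                      bool buttonPressed, std::uint32_t grace_ms) {
    if (buttonPressed) return DoseStatus::Taken;
    if (static_cast<std::uint32_t>(now_ms - alarm_ms) >= grace_ms) {
        return DoseStatus::Missed;
    }
    return DoseStatus::Waiting;
}
```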