Executive Summary

Knowing Internet of Things Data: A Technology Review is a critical review of the Internet of Things (IoT) in the context of big data as a technology solution for business needs. It evaluates how the big data generated by IoT devices can be exploited and managed. The article begins with a literature review of IoT data, defining it in an academic context. It then analyzes the usability of big data in a commercial or business economics context, and discusses and evaluates applications of IoT data that add value to a business. The main objective of the review is to communicate the business sense, or business intelligence, in an organization's use of big data. The premise of the paper is that IoT data is an emerging science that offers organizations a wide range of ways to build services and products or to bridge gaps in the delivery of technology solutions. The possibilities of using big data for marketing, healthcare, personal safety, education and many other economic and technology solutions are discussed.

Introduction

Organizational decisions are increasingly made from data generated by the Internet of Things (IoT), in addition to traditional inputs. IoT data empowers organizations to manage assets, strengthen performance and build new business models. According to MacGillivray, Turner, and Lund (2013), the number of IoT installations is expected to exceed 212 billion devices by 2020. Management of data thus becomes a crucial aspect of IoT, since different types of objects interconnect and constantly exchange different types of information. The volume of data generated and the processes for handling it are critical to IoT and require the use of several technologies.

The addressable market for IoT globally is estimated to be $1.3 trillion by 2019. New business opportunities are therefore plentiful, allowing organizations to become smarter, enhance their products and services, and improve the user and customer experience, thereby creating a Quantified Economy. According to Angeles (2016): (1) Internet of Things spending is $669; (2) smart home connectivity spending is $174 million; (3) connected car spending by 2020 is $220 million. This has led companies to revisit four questions: (1) Are the organization's services or products capable of connecting or transmitting data? (2) Is the organization able to extract optimal value from the data it has? (3) Do the organization's connected devices provide an end-to-end view? (4) Does the organization need to build a full IoT infrastructure, or only parts of a solution to connect devices? Some examples of IoT business value: (1) a real estate holding company adopts smart building networking for real-time power management and saves substantially on energy expenses; (2) logistics companies incorporate sensors in vehicles to gain real-time input on the environmental and behavioural factors that determine performance; (3) mining companies monitor air quality as a safety measure to protect miners.

Hence, the immediate results of IoT data are tangible and relate to several organizational fronts: optimizing performance, lowering risk and increasing efficiency. IoT data becomes the vital bridge that lets organizations gain insight, strengthen their core business, improve safety and leverage data for business intelligence without having to become data companies themselves. By using the IoT data management and storage technologies that vendors offer competitively, organizations can continue to focus on their deliverables instead of on the backend work of generating value from data.

Algorithm Marketplaces

As big data enters its 'industrial revolution' stage, machines generate data faster than people do, through social networks, sensor networks, e-commerce, web logs, call detail records, surveillance, genomics, and internet text or documents. That data grows exponentially in line with Moore's Law, and analytics vendors share in the growth. Virtual marketplaces where algorithms (code snippets) are bought and sold are therefore expected to be commonplace by 2020. Gartner expects three vendors to dominate the marketplace and to transform today's software market through analytics. Simply put, an algorithm marketplace improves on the current app economy by offering entire "building blocks" that can be tailored to the end-point needs of the organization: (1) granular software will sell in greater quantities, since software for a single function or feature will be available cheaply; (2) powerful, cutting-edge algorithms that inventors previously kept in-house become commercially available, widening the scope of application and benefitting businesses; (3) reuse of algorithms is optimized; (4) quality assessment is optimized.

Model Factory

Data storage is cheap, so stored data can be mined to generate information. Technologies in use include MPP (massively parallel processing) databases, distributed databases, cloud computing platforms, distributed file systems and scalable storage systems. On open source platforms such as Hadoop, a data lake can be developed for predictive analytics by adopting a model factory principle. With this technology, which several vendors offer, the data an organization generates does not have to be handled manually by data scientists, who can instead focus on asking the right questions of predictive models. The technology brings real automation to data science, where work was traditionally moved from one tool to the next so that different data sets could be generated and validated against models. Automating this processing not only removes human error but also makes it possible to manage hundreds of models in real time. In the model factories of the future, software will pre-manage data, and scientists will concentrate only on how to run models rather than on iterating their work. Such model factories resemble the Google and Facebook of today, but replace the number-crunching army of engineers with automated software that manages data science processing through tooling and pervasive machine learning. Examples include Skytree.
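The model factory principle can be illustrated with a minimal sketch: several candidate models are built, validated on held-out data and ranked automatically, with no manual hand-off between tools. The three toy forecasters, the `run_factory` function and the sample series are hypothetical and chosen only for illustration; a real model factory would manage far richer models and data.

```python
from statistics import mean

# Hypothetical candidate models: each takes training data and returns
# a function that predicts the value `step` periods after the last point.
def mean_model(train):
    avg = mean(train)
    return lambda step: avg

def last_value_model(train):
    last = train[-1]
    return lambda step: last

def linear_trend_model(train):
    slope = (train[-1] - train[0]) / (len(train) - 1)
    last = train[-1]
    return lambda step: last + slope * step

CANDIDATES = {
    "mean": mean_model,
    "last_value": last_value_model,
    "linear_trend": linear_trend_model,
}

def run_factory(series, holdout=2):
    """Fit every candidate on the training part, score it on the holdout
    part with mean absolute error, and return the best model's name."""
    train, test = series[:-holdout], series[-holdout:]
    results = {}
    for name, build in CANDIDATES.items():
        predict = build(train)
        errors = [abs(predict(i + 1) - actual) for i, actual in enumerate(test)]
        results[name] = mean(errors)
    best = min(results, key=results.get)
    return best, results

series = [10, 12, 14, 16, 18, 20]  # illustrative sensor totals
best, results = run_factory(series)
print(best, results)
```

Adding a model to the factory here means adding one entry to `CANDIDATES`; the validation and selection loop stays unchanged, which is the point of the automation described above.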

Edge analytics

Business environments create unstructured data sets that can reach petabytes or even zettabytes and demand specific treatment in terms of storage, processing and display. Large data-crunching companies such as Facebook or Google therefore cannot rely on conventional database analytic tools such as those offered by Oracle, because big repositories require agile, robust platforms based on distributed or cloud systems or on open source systems such as Hadoop. These platforms involve massive data repositories and thousands of nodes, and evolved from tools developed by Google Inc., such as MapReduce, the Google File System and NoSQL stores. None of these comply with the conventional database properties of atomicity, consistency, isolation and durability (ACID). To overcome this challenge, data scientists collect data and analyze it with automated analytic computation performed at a sensor, network switch or other device, which does not require the data to be returned to a data store for processing. Thus, by annotating and interpreting data and network resources, mining of the acquired data is possible.
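As a rough illustration of analytic computation performed at the device, the sketch below reduces a window of raw readings to a compact summary that could be forwarded in place of the raw data. The function name `summarize_at_edge`, the threshold values and the sample readings are assumptions made for this example, not part of any particular edge platform.

```python
from statistics import mean

def summarize_at_edge(readings, low, high):
    """Summarize a window of raw sensor readings on the device itself.

    Only the summary and any out-of-range readings would be sent onward,
    instead of every raw value.
    """
    if not readings:
        raise ValueError("readings must not be empty")
    alerts = [r for r in readings if r < low or r > high]
    return {
        "count": len(readings),
        "mean": mean(readings),
        "min": min(readings),
        "max": max(readings),
        "alerts": alerts,
    }

# Hypothetical temperature window collected by one sensor
window = [21.0, 21.5, 22.0, 35.0, 21.5]
summary = summarize_at_edge(window, low=15.0, high=30.0)
print(summary)
```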

Anomaly detection

Data scientists use outlier detection, or anomaly detection, to identify instances or events that do not conform to the expected pattern of items in a data set. In short, anomalies are the data points that differ markedly from the remainder of the data. Anomaly detection is used for credit card fraud, fault detection, telecommunication fraud and network intrusion detection. It also draws on statistical tools such as Grubbs' test, which detects outliers in univariate data (Tan, Steinbach, & Kumar, 2013).
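A minimal sketch of the Grubbs' test statistic follows. It computes G = max|x_i - mean| / s, where s is the sample standard deviation, and compares it with a critical value supplied by the caller. The sample readings are invented, and the critical value of 2.02 is an assumption taken as the approximate two-sided 5% tabulated value for seven observations; in practice it should be looked up or derived from the t-distribution for the actual sample size.

```python
from statistics import mean, stdev

def grubbs_statistic(values):
    """Return (G, suspect), where suspect is the value farthest from the
    mean and G is its distance from the mean in sample standard deviations."""
    if len(values) < 3:
        raise ValueError("Grubbs' test needs at least three observations")
    m = mean(values)
    s = stdev(values)
    if s == 0:
        raise ValueError("all values are identical; no outlier to test")
    suspect = max(values, key=lambda v: abs(v - m))
    return abs(suspect - m) / s, suspect

# Hypothetical univariate sensor readings with one suspicious value
readings = [10.1, 9.9, 10.0, 10.2, 9.8, 10.0, 14.0]
CRITICAL_VALUE = 2.02  # assumed table value for N = 7, alpha = 0.05, two-sided

g, suspect = grubbs_statistic(readings)
is_outlier = g > CRITICAL_VALUE
print(suspect, round(g, 3), is_outlier)
```

The test assumes the data are approximately normally distributed and checks one suspect value at a time.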

Event streaming processing

Event stream processing (ESP) is the set of technologies designed to aid the construction of event-based information systems. These technologies include event visualization, event databases, event-driven middleware, event processing languages and complex event processing. Data is processed as soon as it is collected, without a waiting period, and output is produced immediately.
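The idea of processing each event as it arrives, rather than waiting for a batch, can be sketched with a Python generator. The `rolling_average` function and the sample stream are hypothetical; production ESP systems add event-driven middleware, persistence and an event processing language on top of this basic pattern.

```python
from collections import deque

def rolling_average(events, window_size=3):
    """Yield an updated rolling average immediately after each event."""
    window = deque(maxlen=window_size)  # keeps only the most recent events
    for value in events:
        window.append(value)
        yield sum(window) / len(window)

# Hypothetical stream of sensor values; any iterator works as the source
stream = iter([10, 20, 30, 40])
outputs = list(rolling_average(stream))
print(outputs)  # [10.0, 15.0, 20.0, 30.0]
```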

Text analytics

Text analytics refers to text data mining and uses text as the unit for information generation and analysis. High-quality information is derived from text by identifying patterns and trends through statistical pattern learning. Unstructured text is processed into meaningful data for analysis so that customer opinions, feedback and product reviews can be quantified. Applications include sentiment analysis, entity modelling and decision support.
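One simple way to quantify opinions in unstructured text is a lexicon-based sentiment score. The sketch below is a deliberately small illustration: the word lists, the `sentiment_score` function and the sample reviews are all assumptions, and real text analytics tools rely on much larger lexicons or on statistical models.

```python
import re
from collections import Counter

# Tiny hypothetical sentiment lexicon
POSITIVE = {"good", "great", "reliable", "love"}
NEGATIVE = {"bad", "slow", "broken", "hate"}

def sentiment_score(text):
    """Return (positive word count) minus (negative word count)."""
    tokens = re.findall(r"[a-z']+", text.lower())
    counts = Counter(tokens)
    pos = sum(counts[w] for w in POSITIVE)
    neg = sum(counts[w] for w in NEGATIVE)
    return pos - neg

reviews = [
    "Great sensor, very reliable.",
    "Setup was slow and the app is broken.",
    "It works.",
]
scores = [sentiment_score(r) for r in reviews]
print(scores)  # [2, -2, 0]
```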

Data lakes

Data lakes are storage repositories that hold raw data in its native format until it is required. The data is stored in a flat architecture, in contrast to the hierarchical storage of a data warehouse. Data structures are defined only when the data is needed. Vendors include Microsoft Azure, alongside several open source options.
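Defining structure only when the data is needed is often called schema-on-read. The sketch below assumes the lake is a flat collection of raw JSON strings; the record fields, device names and the `read_temperatures` function are hypothetical. Records are stored untouched, and a structure is imposed only at the moment a temperature analysis asks for it.

```python
import json

# Raw records kept in native format, with no shared schema enforced on write
raw_lake = [
    '{"device": "thermostat-1", "temp_c": 21.5, "ts": "2017-01-01T00:00:00"}',
    '{"device": "door-7", "open": true, "ts": "2017-01-01T00:00:05"}',
    '{"device": "thermostat-1", "temp_c": 22.0, "ts": "2017-01-01T00:01:00"}',
]

def read_temperatures(lake):
    """Apply a (device, timestamp, temperature) structure at read time,
    skipping raw records that do not carry a temperature."""
    rows = []
    for line in lake:
        record = json.loads(line)
        if "temp_c" in record:
            rows.append((record["device"], record["ts"], float(record["temp_c"])))
    return rows

temps = read_temperatures(raw_lake)
print(temps)
```

A different question, such as how often doors were opened, could impose a different structure on the same raw records without reloading or reshaping the lake.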

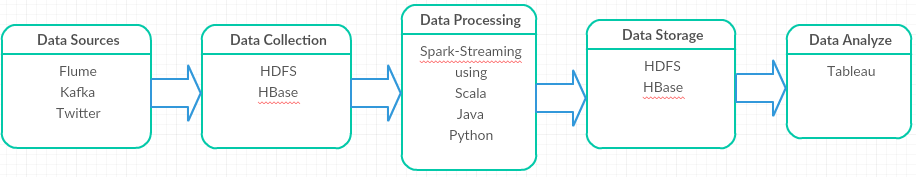

Spark

Spark is a key technology for IoT data: it simplifies real-time big data integration for advanced analytics and supports real-time use cases that drive business innovation. Such platforms generate native code, which needs to be further processed for Spark Streaming.

Conclusion

According to Gartner, as many as 43% of organizations are committed to investing in and implementing IoT, which indicates the massive scale of data that organizations will come to generate. For utilities, fleet management and healthcare organizations, the use of IoT data will transform cost savings, operational infrastructure and asset utilization, as well as safety, risk mitigation and efficiency. The right technologies deliver on the promise of big data analytics for IoT data repositories.

References

Angeles, R. (2016). SteadyServ Beer: IoT-enabled product monitoring using RFID. IADIS International Journal on Computer Science & Information Systems, 11(2).

Chen, H., Chiang, R. H., & Storey, V. C. (2012). Business intelligence and analytics: From big data to big impact. MIS Quarterly, 36(4), 1165-1188.

Fredriksson, C. (2015, November). Knowledge management with Big Data Creating new possibilities for organizations. In The XXIVth Nordic Local Government Research Conference (NORKOM).

MacGillivray, C., Turner, V., & Lund, D. (2013). Worldwide Internet of Things (IoT) 2013–2020 Forecast: Billions of Things, Trillions of Dollars. IDC Market Analysis.

Tan, P. N., Steinbach, M., & Kumar, V. (2013). Data mining cluster analysis: basic concepts and algorithms. Introduction to data mining.

Troester, M. (2012). Big data meets big data analytics: Three key technologies for extracting real-time business value from the big data that threatens to overwhelm traditional computing architectures. SAS Institute Inc. White Paper.